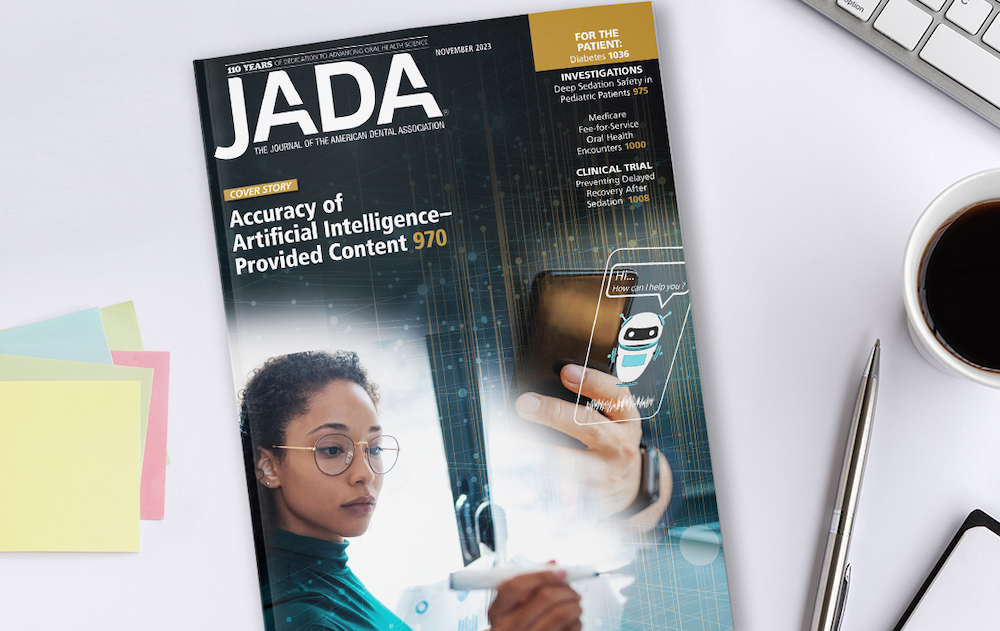

November JADA evaluates ChatGPT as educational resource for dental students

Authors say chatbot may supplement existing learning programs

An article published in the November issue of The Journal of the American Dental Association evaluated ChatGPT’s ability to output accurate dental content as an educational resource for dental students.

The cover story, “The Performance of Artificial Intelligence Language Models in Board-Style Dental Knowledge Assessment: A Preliminary Study on ChatGPT,” tested ChatGPT3.5 and ChatGPT4 on a board-style multiple-choice dental knowledge assessment.

Only ChatGPT4 displayed a competent ability to output accurate dental content, according to the study. On average, ChatGPT3.5 answered 61.3% of the assessment questions correctly, while ChatGPT4 answered 76.9% correctly.

“Future research should evaluate the proficiency of emerging models of ChatGPT in dentistry to assess its evolving role in dental education,” the authors said in the study. “Although ChatGPT showed an impressive ability to output accurate dental content, our findings should encourage dental students to incorporate ChatGPT to supplement their existing learning program instead of using it as their primary learning resource.”

Other articles in the November issue of JADA discuss deep sedation safety in pediatric patients, Medicare fee-for-service oral health encounters and prevention of delayed recovery after sedation.

Every month, JADA articles are published online at JADA.ADA.org in advance of the print publication. ADA members can access JADA content with their ADA username and password.